Abstract

Technology today is at a crossroads between Industry 4.0 and 5.0, where products and services are designed and manufactured to be ‘human-centered’ and sustainable. Such objectives have always been expected in education, especially in the way the workforce is trained. Building on prior research by a team from Pasadena City College (PCC) and UC Irvine, we have formed a bi-coastal collaborative, including Mercer County Community College (MCCC) and Princeton University, to expand and transcend our earlier AI-powered virtual reality (VR) simulation framework and platform, AI-powered digital twin (DT/AI) for education. This Phase-2 effort demonstrates a working, multi-institution R&D platform with the following capabilities: (a) new training modules with enhanced lithography training and advanced device packaging, (b) better optimized, high-fidelity equipment emulations and process simulations that more closely replicate the physical equipment, (c) adherence to documented facility-specific Standard Operating Procedures (SOPs) and manufacturing process flows, (d) a parallel fabricated chip-under-test and its test board for I/O connectivity verification, and, last but not least, (e) tightly coupled agentic AI engines available throughout the training session to customize learner-centric experiences and enhance individual knowledge acquisition and retention. The authors also continue to demonstrate the feasibility and scalability benefits of foundational VR/AR-based training for students, technicians, and up-skill learners for whom direct cleanroom or packaging lab access is often unattainable; with DT/AI, learning and feedback are affordable and widely accessible.

Keywords: digital twin, VR/AR simulation, artificial intelligence, agentic AI, semiconductor technology, simulation-based training, advanced device packaging, photolithography

© 2026 under the terms of the J ATE Open Access Publishing Agreement

Introduction

The surge in domestic semiconductor fabrication has placed unprecedented pressure on the workforce pipeline, with industry analyses indicating a large shortfall in highly trained, skilled technicians and engineers by the decade’s end [1]. Conventional instructional approaches, anchored in limited cleanroom access, costly equipment, and scant expert-led workshops, are unable to scale rapidly or provide the immersive, hands-on practice essential for procedural and skill mastery needed by manufacturing technicians.

Building on earlier research from the West Coast team and a pilot study conducted at PCC [2], which demonstrated that undergraduate and high school students are capable of developing and evaluating VR/AR simulators to train on UV lithography, we have broadened the effort to include Digital Twin technology [3]. Unlike conventional simulations, which replicate outward behavior, Digital Twin (DT) technology also emulates the inner workings of physical systems. The West Coast team expanded training content for chip fabrication front-end modules, while the East Coast partner developed equipment usage and workflow training for advanced device packaging. Each site integrated AI-driven feedback agents to offer individualized, real-time hints and corrective guidance. To ensure consistency across institutions, our bi-coastal team also established and followed common development guidelines, summarized as the TC4 approach for capturing, creating, cataloging, and customizing training content.

DT along with AI (DT/AI) are two of the key pillars in Industry 4.0 and 5.0 advancements, where 5.0 emphasizes the “smartness” of the technology. While prior efforts have explored VR-based visualization or single-institution simulations, access to authentic cleanroom training remains constrained by cost, safety requirements, and limited facility availability. These constraints disproportionately affect early-stage learners, motivating the need for a scalable training framework that allows repeated, guided practice before physical cleanroom entry.

We validated the DT/AI platform through live demonstrations with students, faculty, and cleanroom specialists, collecting qualitative observations on improved DT/AI literacy, task fluency, spatial reasoning, and trainee confidence. This collaborative model, uniting diverse institutional strengths and sharing a streamlined DT/AI tool platform and ecosystem, accelerates DT/AI development, cultivates current best practices, and lays the groundwork for broader adoption beyond the current two bi-coastal teams. By combining institutional expertise and technological resources, the platform demonstrates adaptability to varied curricular requirements and resource constraints, offering a scalable model for the creation and dissemination of DT/AI-based training content and instructional best practices.

The goals of this study and development are to (1) demonstrate the value of a comprehensive, DT/AI-based training environment for the semiconductor workforce; (2) verify its adaptability across institutions with differing resources and curricula; and (3) establish an open framework that invites additional community colleges and universities across the nation to contribute, extend, and benefit from this shared DT/AI infrastructure and collective knowledge base. The following sections detail our DT/AI co-design and development methodology, module implementations, findings from trial sessions, and recommendations for scaling immersive training content development across the nation for semiconductor workforce training.

Digital Twin-Based Education System

The Systems Engineering Body of Knowledge defines a digital twin as a “high-fidelity model of the system which can be used to emulate the actual system” [4]. This concept has been widely adopted across industries. Educational digital twins coupled with AI engines, increasingly used in virtual learning environments, intelligent teaching, and learner analytics, do not primarily model or predict the future physical state of a system; instead, they support the creation of risk-free training spaces and the analysis of learner behavior and performance. Consequently, the data informing educational digital twins differ in scope and depth, consisting largely of standard operating procedures, equipment specifications, quantitative learner data, and learner sentiment and outcomes, rather than continuous sensor-driven physical measurements. This contextual distinction is reflected in prior literature proposing dynamic, application-dependent classifications of digital twins; under such frameworks [5], the DT/AI system presented in this work is categorized as an ‘analytical digital twin operating under human oversight’, with non-automated data exchange due to equipment sensitivity and safety considerations. The proposed framework is parametric, allowing developers to define the frequency of data synchronization, and accommodates periodic, non–real-time updates from heterogeneous data sources (e.g., images, video, SOPs, and learner data). This is consistent with prior findings that educational and training-oriented digital twins often operate with intermittent data flow and humans in the loop, rather than continuous real-time system integration.
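
To make the idea of developer-defined synchronization frequency concrete, the following is a minimal sketch, not the platform's implementation. The names `DataSource`, `sync_interval_hours`, and `sources_due` are hypothetical, and the intervals are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class DataSource:
    """One input feeding the educational digital twin (hypothetical model)."""
    name: str                   # e.g. "SOPs", "learner data"
    sync_interval_hours: float  # developer-defined update period
    last_sync_hour: float = 0.0


def sources_due(sources, now_hour):
    """Return names of sources whose periodic, non-real-time update is due."""
    return [s.name for s in sources
            if now_hour - s.last_sync_hour >= s.sync_interval_hours]


# Illustrative intervals only; in practice a human would review each update.
sources = [
    DataSource("SOPs", sync_interval_hours=24 * 30),
    DataSource("equipment images", sync_interval_hours=24 * 7),
    DataSource("learner data", sync_interval_hours=24),
]
due_now = sources_due(sources, now_hour=48)
print(due_now)
```

Each source carries its own interval, so slowly changing material such as SOPs can be refreshed far less often than learner data, which matches the intermittent, human-in-the-loop data flow described above.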

Partner Facility Overview

A key limitation of many immersive training systems is their reliance on site-specific content that cannot be easily reused or extended. By intentionally developing and validating DT/AI modules across geographically and operationally distinct facilities, this work demonstrates how standardized asset libraries and workflows can support interoperable training content spanning both front-end fabrication and back-end packaging.

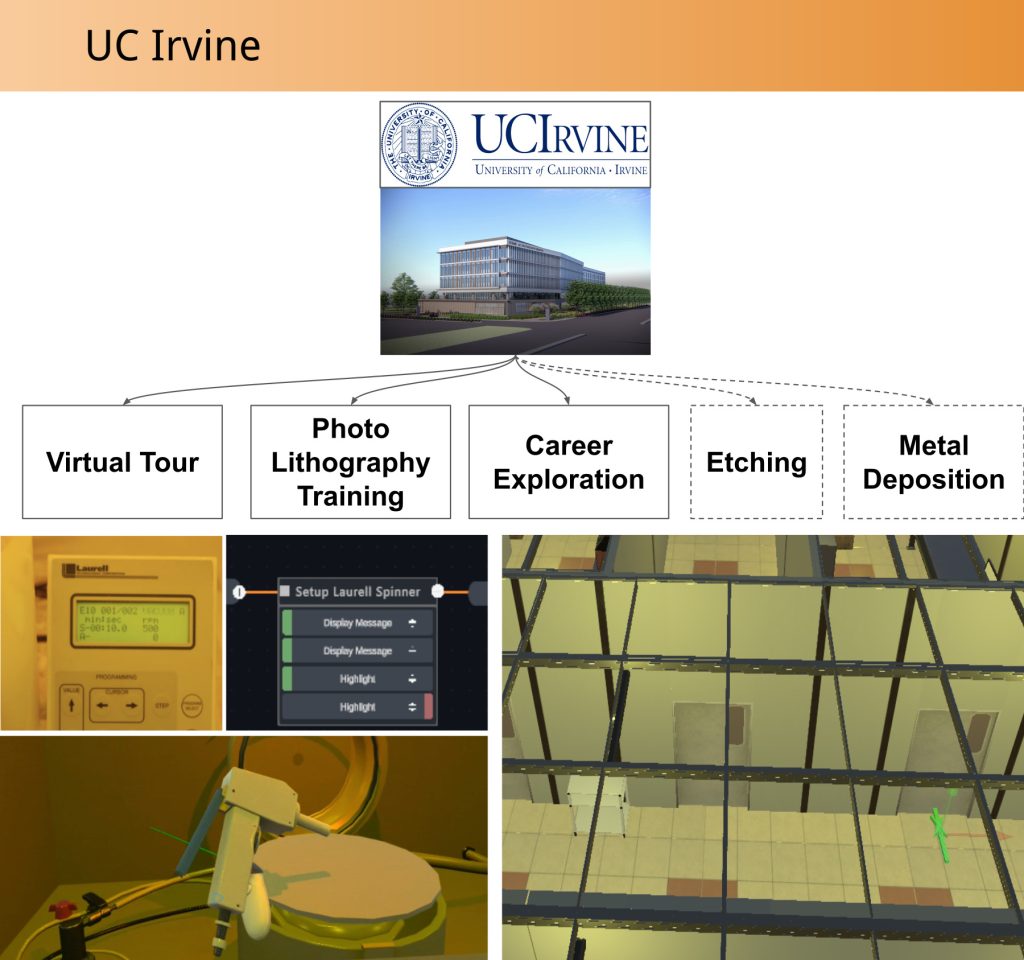

West Coast Facility (UC Irvine INRF)

The Integrated Nanosystems Research Facility (INRF) at the California Institute for Telecommunications and Information Technology at the University of California, Irvine is a Class 1,000/10,000 research cleanroom occupying approximately 9,600 ft² [6]. It features a multi-stage gowning area, interlocked airlocks, and HEPA-filtered ventilation, achieving over 350 air changes per hour. This tightly controlled environment supports a range of front-end processes. We focused on UV lithography using a Karl Suss MA6™ contact mask aligner, metal deposition with an E-Beam™ 1 evaporator (Temescal CV-8), and deep reactive-ion etching via an STS DRIE™ system. Supporting photoresist handling and chemical treatments are performed at Laurell™ photoresist spin coater stations and adjacent wet benches.

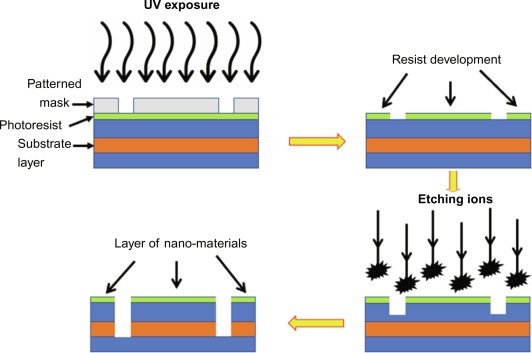

Over the course of a month, INRF staff conducted workshops during which our team systematically documented the facility and its processes. High-resolution photographs and videos of control panels, chamber interiors, and wafer-handling workflows were captured to match the real equipment layout. Floor-plan schematics were also obtained to preserve exact equipment placement, enabling accurate spatial correspondence between the physical cleanroom and its digital twin representation. These documented fabrication steps, including UV exposure, resist development, etching, and material deposition, are summarized schematically in Figure 1, which illustrates the front-end photolithography workflow used to train students on INRF microfabrication processes.

East Coast Facility (Advanced Micro Assembly and Packaging (AMAP) Lab, Princeton Materials Institute)

The Advanced Micro Assembly and Packaging (AMAP) Lab is a core facility of the Princeton Materials Institute (PMI), Princeton University [7]. The AMAP facilitates a wide range of post-fabrication services essential to semiconductor device packaging. It provides access to dicing and singulation, planarization, and different bonding techniques such as wedge bonding, ball bonding, and flip-chip bonding. The Lab also supports precision packaging, heterogeneous integration, and back-end prototyping within semiconductor research and education.

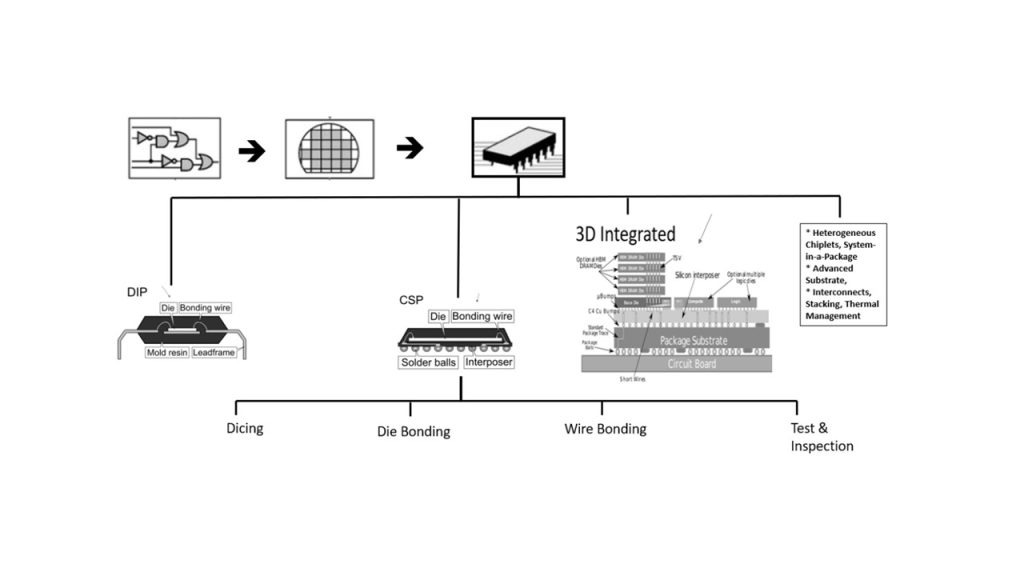

Over the span of six months, AMAP staff provided access to equipment and tools such as the ADT-7100™ dicer, the Tresky™ flip-chip bonder, and the Delvotec™ wire bonder, together with a series of guided tours and tutorials, so that the team could learn Chip-Scale Packaging (CSP), which is highlighted in the chip packaging evolution shown in Figure 2. The lab features ESD-shielded workstations, optical inspection tools, and thermal control systems, offering a highly controlled environment for handling and bonding operations. Beyond observational access, select tools were operated under supervision to carry out key procedures such as die alignment, bonding, and inspection. Notably, unlike front-end chip fabrication, certain packaging operations do not require full cleanroom bunny suits.

System Design and Development

Unlike prior VR cleanroom training tools that focus primarily on visualization or isolated procedures, the proposed framework combines high-fidelity digital twins with an agentic AI tutor that actively monitors learner state and task progression. This integration enables not only realistic equipment interaction, but also adaptive guidance, error correction, and contextual explanation during training.

Multi-Institution Collaboration Guidelines

The common goal of the partner institutions is to develop an immersive and interactive 3D VR training simulation of key semiconductor fabrication processes, including photolithography and advanced packaging, for deployment on the web and on virtual reality headsets. To start, we defined joint guidelines: use a common software framework, platform, and tools; follow similar software development methodologies; maintain and access a common 3D model library repository; and execute similar project development phases, so that the resulting DT/AI outputs integrate seamlessly and provide trainees with a smooth learning experience. The teams also cross-checked and provided feedback on each other’s DT/AI outputs.

Development Details (Methodology: Platform, Flow, and Tools)

Authoring Platform

DT/AI content was authored using the HyperSkill platform [8], which leverages Blender [9] and the Unity [10] game engine for 3D modeling and rendering, AWS S3 cloud services [11] for asset storage and delivery, and OpenAI [12] services for text generation that drives dynamic instructional prompts, agentic support, and development co-piloting. A web‑based version was produced in parallel to accommodate learners who experience VR discomfort or for whom web access is more readily available.

Flow and Tools

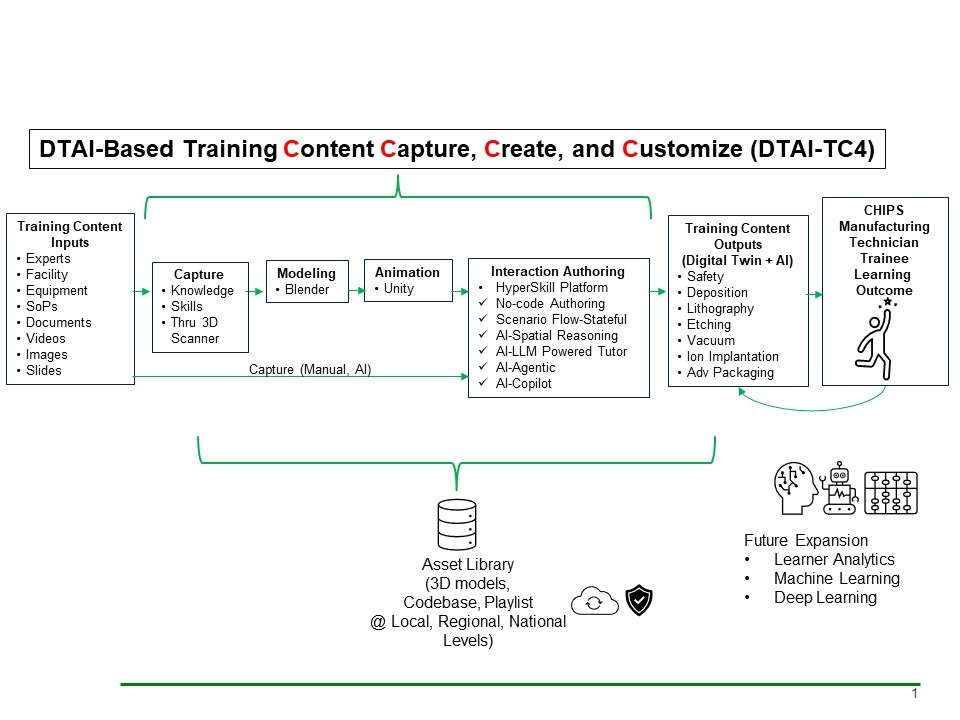

The flow diagram in Figure 3 depicts the inputs, outputs, and intermediate tools, some open-source and some commercial, used to capture, create, catalog, and customize training content that supports immersive virtual human-machine interaction for training purposes.

Content Source Capturing

Our team first captured workflows by carefully photographing and video-recording the facility, equipment, tool usage, interfaces, and work-holding practices. We collected annotated standard operating procedures (SOPs) detailing critical process parameters, such as dicing feed rates and spindle speeds, die attach alignment tolerances, and bonding force profiles. Laboratory layout dimensions and equipment placement were measured to support accurate 3D model reconstruction. Cell-phone cameras and 3D scanners were used to capture specific equipment details and measurements.

3D Object Modeling Using Open-Source Software Tool: Blender

We then converted the captured references into interactive virtual environments, using Blender to reconstruct equipment and tool geometries and the cleanroom floor plan. Modeled assets and layout data were stored in a 3D model library repository, and these shared assets served as the authoritative blueprint for VR module development. For some equipment, the facility staff provided CAD model files available from the equipment supplier. Such CAD models are often far more detailed than DT content requires, and their polygon (face) count must be reduced to meet memory and runtime constraints. Polygon reduction is itself time-consuming, so there is a tradeoff between creating a model from scratch and reducing the supplier’s original CAD model. Open-source software such as Instant Meshes can assist in this process by automatically reducing polygon count, providing a practical alternative to fully manual optimization.
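
As a rough illustration of what automated polygon reduction does, the sketch below applies vertex clustering, one of the simplest decimation techniques. It is not the algorithm used by Instant Meshes or Blender, and the function name and mesh representation are assumptions made for this example.

```python
def decimate_by_vertex_clustering(vertices, triangles, cell_size):
    """Merge vertices that fall in the same grid cell; drop collapsed faces.

    vertices: list of (x, y, z) tuples.
    triangles: list of (i, j, k) vertex-index tuples.
    """
    cell_to_new = {}   # grid cell -> index of its representative vertex
    old_to_new = []    # old vertex index -> new vertex index
    new_vertices = []
    for x, y, z in vertices:
        cell = (int(x // cell_size), int(y // cell_size), int(z // cell_size))
        if cell not in cell_to_new:
            cell_to_new[cell] = len(new_vertices)
            new_vertices.append((x, y, z))  # first vertex represents the cell
        old_to_new.append(cell_to_new[cell])

    new_triangles = []
    seen = set()
    for i, j, k in triangles:
        a, b, c = old_to_new[i], old_to_new[j], old_to_new[k]
        if len({a, b, c}) < 3:
            continue  # triangle collapsed to an edge or a point
        key = frozenset((a, b, c))
        if key in seen:
            continue  # duplicate face created by merging
        seen.add(key)
        new_triangles.append((a, b, c))
    return new_vertices, new_triangles


# Tiny made-up mesh: vertices 0 and 1 are close enough to merge.
verts = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (1.0, 0.0, 0.0),
         (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
tris = [(0, 1, 3), (1, 2, 3), (2, 4, 3)]
small_verts, small_tris = decimate_by_vertex_clustering(verts, tris, cell_size=0.5)
print(len(verts), "->", len(small_verts), "vertices;",
      len(tris), "->", len(small_tris), "triangles")
```

A larger `cell_size` removes more detail, which mirrors the tradeoff between visual fidelity and memory or runtime cost noted above.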

3D Animation Using Commercial Game Engine: Unity

The Blender models were then imported into the Unity engine, where control-panel interfaces, process animations, and safety interlock logic were implemented. In Unity, we built simulation sequences based on subject matter experts’ demonstrations, SOPs, and/or user tasks. Die singulation, alignment and bonding, and post-bond inspection are examples of simulated sequences, with technician feedback triggered by common errors such as misplaced bonds or incorrect tool settings. All layout and interface logic was tested with human experts to ensure spatial and procedural accuracy.

By importing true-to-scale geometry, control-panel layouts, and SOP-derived timing directly into Unity, we also achieved centimeter-scale spatial fidelity and accurate process sequencing. Iterative walkthroughs with lab facility technicians and staff validated each module’s precision and immersion, and realistic failure-mode scenarios were incorporated to support comprehensive, risk-free what-if condition training in core photolithography and device packaging procedures.

3D Animation with Interactions using Commercial Software Tool: HyperSkill by SimInsights

We imported our Unity scene (cleanroom layouts and animated equipment) into SimInsights’ HyperSkill [13] and bound user interactions and system responses to those assets using Scenario Flow, a visual state-machine editor. Scenario Flow lets authors define states, actions, attributes, and transitions with branching logic, so the module progresses step by step, mirroring the SOPs.

Methods

Content Collection

The project began with fabrication site visits to document cleanroom and packaging workflows. At UC Irvine, the focus was on cleanroom and lithography-specific procedures, while at Princeton the emphasis was on advanced packaging techniques. Data collected across both sites included high-resolution photographs of room layout and equipment details, as well as video recordings of procedures and safety protocols, including full walkthroughs of the respective processes. At Princeton, 3D scanning was also used to capture detailed geometry of fabrication machinery to supplement photographic references and support asset development. Standard operating procedures (SOPs) and related procedural documentation for the equipment were additionally gathered. This body of material became the foundation for accurate and immersive content development and informed subsequent simulation design decisions.

Asset Creation

Asset creation and cataloging proceeded iteratively throughout development. The team imported and created 3D models of each cleanroom’s equipment and machinery, consolidating and customizing a 3D asset library containing over 100 laboratory objects and lab facility or cleanroom layouts. The team used the image, video, and documentation content to create high-fidelity models of many cleanroom items, primarily using software tools such as Blender and Unity to carry out the asset design. Assets requiring motion, particularly machinery, were imported into Unity and animated to capture realistic operational behavior, while static objects were left unanimated. All assets were optimized for real-time performance and enhanced with realistic textures to maintain visual fidelity across platforms. Iterative cataloging allowed the asset library to grow steadily while ensuring consistent quality and performance.

With the asset library complete, each team developed a virtual space using the layout data of its fabrication facility. The virtual space includes ambient and accent lighting. Once the virtual space was completed, the team placed assets within it according to their locations in the respective facility, and the layout was validated using video data recorded from process walkthroughs. The spatial layout provided the basis for defining instructional sequences and integrating spatial awareness into the AI model.

Storyboard Creation and Instructional Scripting

Storyboards (sequences of action and dialogue items linked by user-triggered transitions) were developed to define learning objectives and process sequences across both fabrication and packaging workflows. For front-end processes, this included wafer preparation, spin coating, mask alignment, UV exposure, development, and post-exposure bake. For packaging, the steps of die attachment, wire bonding, and underfill application were identified. The storyboard also outlined a sequential flow of user interactions, system responses, and optional branching scenarios designed to address common errors or alternative workflows. The storyboards and instructional scripts were created from SOP and process walkthrough data collected from each team’s visits to their respective facilities and validated by domain experts.

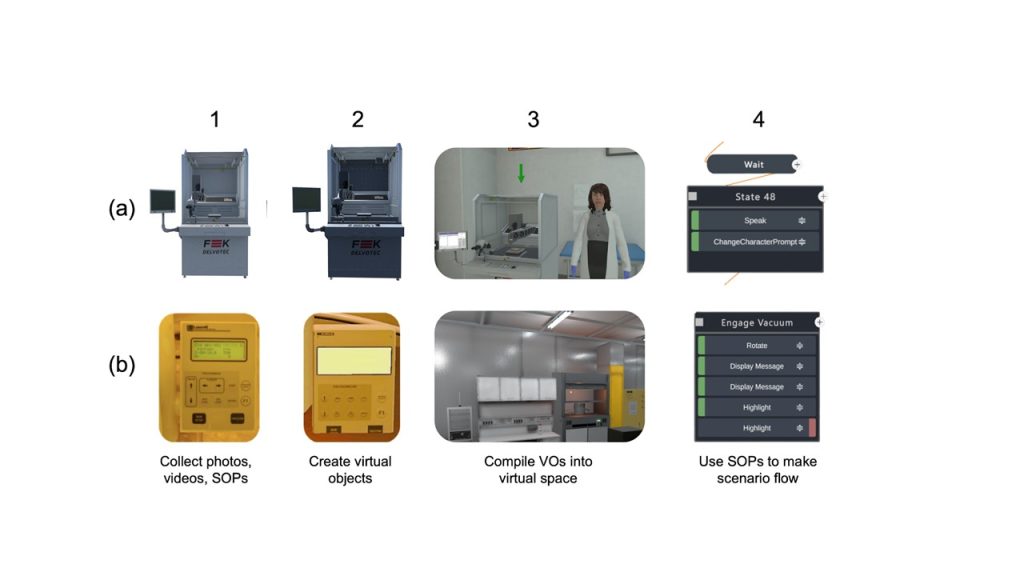

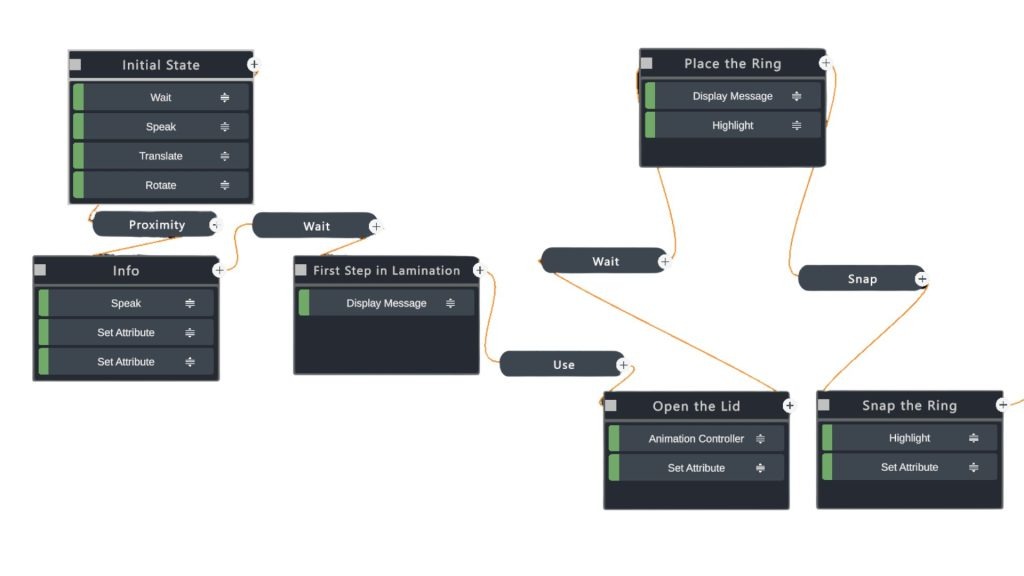

The simulation’s behavior was defined using the Scenario Flow editor present within the development platform. A state node (as shown in column 4 of Figure 4) was created for each instructional step and configured with entry prompts, expected input conditions, and feedback rules. Transitions were added between nodes and set to trigger only when the learner’s action met validation criteria, ensuring that progression occurred strictly in sequence and at a pace dictated by the user’s proficiency level.
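
The gated, strictly sequential progression described above can be sketched as a small state machine. This is an illustrative model only and does not reproduce the Scenario Flow editor's internals; `StateNode`, `ScenarioRunner`, and the step names, prompts, and hints are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class StateNode:
    name: str
    prompt: str            # entry prompt shown to the learner
    expected_action: str   # input that satisfies the validation criterion
    hint: str              # feedback shown after an incorrect action


@dataclass
class ScenarioRunner:
    nodes: list
    index: int = 0
    log: list = field(default_factory=list)

    @property
    def finished(self):
        return self.index >= len(self.nodes)

    def submit(self, action):
        """Advance only when the action matches the current node's criterion."""
        if self.finished:
            return "Scenario complete."
        node = self.nodes[self.index]
        ok = action == node.expected_action
        self.log.append((node.name, action, ok))
        if not ok:
            return node.hint  # stay in the same state
        self.index += 1
        return "Scenario complete." if self.finished else self.nodes[self.index].prompt


nodes = [
    StateNode("load_wafer", "Place the wafer on the chuck.",
              "place_wafer_on_chuck", "Hint: the wafer must be on the chuck first."),
    StateNode("engage_vacuum", "Engage the chuck vacuum.",
              "engage_vacuum", "Hint: engage the vacuum to hold the wafer."),
]
runner = ScenarioRunner(nodes)
first = runner.submit("engage_vacuum")          # out of sequence: hint, no advance
second = runner.submit("place_wafer_on_chuck")  # correct: next prompt is returned
third = runner.submit("engage_vacuum")          # correct: scenario ends
```

Because a transition fires only on a validated action, learners who make mistakes simply stay in the current state and receive a hint, so pace follows proficiency. The `log` list stands in for the analytics record.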

In parallel, the storyboard was expanded into a detailed instructional script. Each step specified three elements: an on-screen prompt with learner instructions, the expected user action (e.g., “place wafer on chuck and engage vacuum”), and system feedback providing confirmation of correct actions or context-sensitive hints. This scripting phase ensured clarity, technical precision, and consistency with defined learning objectives. Within the Scenario Flow, as seen in Figure 5, user triggers such as “Use,” “Snap/Unsnap,” “Proximity,” and “Grab/Release” were mapped to specific equipment responses. For example, pressing a virtual button on the die bonder fires a “Use” trigger that executes a “Play Animation” action on the corresponding mechanism. Picking up a wafer and placing it onto the stage uses hitboxes combined with the “Snapped” attribute to validate correct placement before advancing. Rich training media and instructional checks were embedded throughout the scenario flow. Boolean gating functions (e.g., if quiz_score ≥ 70) are handled using “Conditions” on transitions. The “Play Video” action attaches video content to in-scene monitors or displays it as anchored overlays during critical steps, while “Quiz” actions introduce scored knowledge checks at decision points. These elements reinforce procedural understanding while maintaining learner engagement.
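
The placement check and Boolean gating just described can be illustrated with a short sketch. It assumes an axis-aligned hitbox and a plain dictionary of attributes; the names `Hitbox`, `on_release`, and `can_advance` are hypothetical, the coordinates are arbitrary, and only the threshold of 70 comes from the example condition in the text.

```python
from dataclasses import dataclass


@dataclass
class Hitbox:
    """Axis-aligned box around a placement target (units are arbitrary)."""
    min_corner: tuple
    max_corner: tuple

    def contains(self, point):
        return all(lo <= p <= hi
                   for lo, p, hi in zip(self.min_corner, point, self.max_corner))


def on_release(wafer_position, stage_hitbox, attributes):
    """Release trigger: set the 'Snapped' attribute if the wafer is in place."""
    attributes["Snapped"] = stage_hitbox.contains(wafer_position)
    return attributes["Snapped"]


def can_advance(attributes, quiz_score, passing_score=70):
    """Transition condition: wafer snapped AND quiz_score >= passing_score."""
    return attributes.get("Snapped", False) and quiz_score >= passing_score


stage = Hitbox(min_corner=(0.0, 0.0, 0.0), max_corner=(1.0, 1.0, 0.2))
attrs = {}
on_release((0.5, 0.5, 0.1), stage, attrs)   # wafer released over the stage
print(can_advance(attrs, quiz_score=85))
print(can_advance(attrs, quiz_score=60))
```

Keeping the spatial check and the score check as separate predicates lets an author combine them freely on a transition, which is the role the text assigns to “Conditions.”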

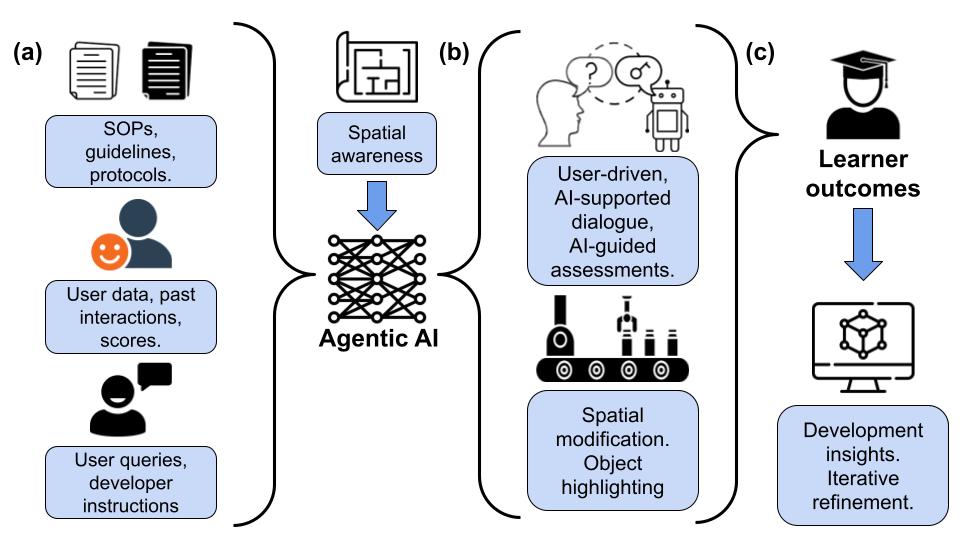

AI Implementation

Recent studies on artificial intelligence, particularly the use of large language model (LLM)-powered agentic systems, have demonstrated effectiveness in supporting educational needs across disciplines and learner age groups [14]. Agentic artificial intelligence is defined as a model, typically a large language model, that possesses a degree of autonomy and independence, with the ability to interact with its environment and the user by taking actions or completing tasks. This capability extends beyond simple search-and-response behavior, due to high generalizability and the ability to personalize responses based on learner context. Figure 6 illustrates the role of the AI agent within the DT/AI-based education framework and its interactions with the user and the simulated environment. User-provided and developer-defined data, together with fab-specific data taken in by the digital twin at an author-specified frequency, constitute the inputs to the AI agent and inform its decision-making processes. Based on these inputs, the AI agent formulates task-specific goals and engages the learner through SOP-informed dialogues, visual highlighting of task-relevant objects, and limited modifications to the spatial environment intended to support learning task completion. Learner outcomes are subsequently evaluated using in-platform analytics services, complemented by qualitative user feedback and quantitative measures such as statistical analysis of survey data, as demonstrated in prior work [3]. These aggregated insights are then used by authors and developers to iteratively refine the simulation, thereby establishing a feedback loop that exemplifies information exchange between the physical world and digital twins, mediated through user-centered and AI-guided interactions for learning.

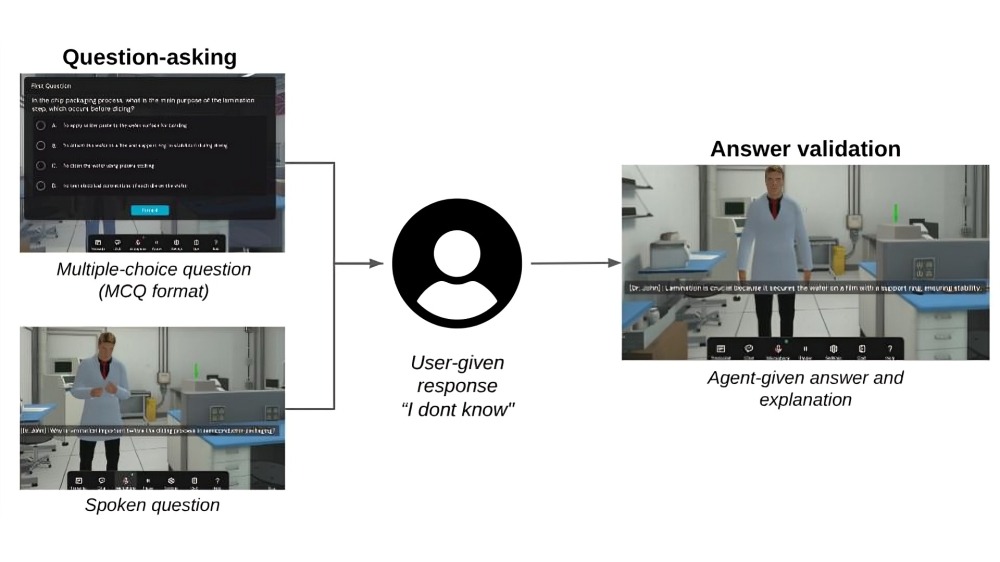

Within the DT/AI environment, the agent continuously builds contextual understanding from SOP documents, information available online, the history of actions performed by the user, and ongoing user queries and dialogue. Informed by this information, the agent takes actions that help the learner understand central concepts through both direct dialogue, such as open-ended question-and-answer interactions (as shown in Figure 7), and indirect interactions, such as 3D object highlighting. As depicted in Figure 6, the agent’s spatial awareness allows it to account for the three-dimensional location of objects relevant to the current step or task. This enables object-specific dialogue, targeted highlighting, and spatial guidance, allowing learners to ask questions about particular machines and receive visually grounded explanations.

The AI tutor continuously monitors learner performance, identifies deviations from the expected workflow, and adapts its responses in real time to address misunderstandings. All interactions are recorded for analytics, enabling educators to review question-and-answer patterns and refine future instruction.

ChatGPT-4 is embedded in the AI agent system, allowing learners to enter prompts, ask questions, and receive responses to reinforce learning in a manner similar to a contextualized search engine. Building on this capability, an LLM-powered AI copilot functions as a context-aware assistant embedded within the simulation environment. By leveraging HyperSkill’s state-based Scenario Flow, as shown in Figures 5 and 6, the agent can identify which state is being executed at any given moment.

When learners encounter difficulties or become stuck, the AI tutor recognizes the current state and provides immediate, context-sensitive, and user-personalized guidance. This may include simplifying procedural explanations or suggesting corrective actions aligned with the instructional design. The tutor’s state-tracking and spatial guidance capabilities reduce learner frustration while maintaining progression through complex procedures.
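
One way to picture state-aware tutoring is as context assembly before each model call. The sketch below builds chat-style messages from the current state, the matching SOP step, and recent learner actions. It makes no API call and is not the platform's integration; the function name, prompt wording, and example inputs are assumptions, and the role/content layout simply follows the common chat-message convention.

```python
def build_tutor_messages(state_name, sop_step, action_history, question,
                         max_history=5):
    """Assemble chat-style messages giving an LLM tutor the current context."""
    recent = action_history[-max_history:]  # keep the prompt short
    history_text = "\n".join(f"- {a}" for a in recent) or "- (no actions yet)"
    system = (
        "You are a cleanroom training tutor. Answer using the SOP step below "
        "and give hints rather than full solutions.\n"
        f"Current state: {state_name}\n"
        f"SOP step: {sop_step}\n"
        f"Recent learner actions:\n{history_text}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


# Hypothetical inputs for illustration.
history = [f"action_{i}" for i in range(8)]
messages = build_tutor_messages(
    state_name="mask_alignment",
    sop_step="Align the mask to the wafer alignment marks.",
    action_history=history,
    question="Why can't I start the exposure?",
)
print(messages[0]["content"])
```

Passing the state name and SOP step on every turn is what would let responses stay tied to the step being executed rather than to the conversation alone.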

Cross-Platform Deployment and Testing

Once every node and transition had been fully mapped and tested for logical consistency, we generated incremental software builds for both the VR headset and the web browser to begin comprehensive unit and functional testing of each specific build.

The platform allows the simulation to be built for both immersive VR and standard web browsers to maximize accessibility. Because the underlying platform supports multi‑device deployment without modification, the same interactive scenario runs seamlessly on VR headsets such as Meta Quest 2 and Apple Vision Pro, mixed‑reality systems like HoloLens, desktop computers on Windows or macOS, Android and iOS mobile devices, and any modern web browser. Learners choose which content to access from the web and which from the headset.

Validation of DT/AI Output

Testing encompassed a series of iterative validation cycles. The development team performed manual walkthroughs of every simulation sequence on both the VR headset and the browser build, verifying that each interaction triggered the correct response and logging any anomalies in our issue‑tracking system. Reported issues, such as misaligned colliders, unclear prompts, or unexpected behavior, were prioritized and addressed during rapid development sprints.

We conducted usability sessions with a small group of volunteers unfamiliar with semiconductor workflows. Observers documented navigation challenges, misinterpretations of instructions, and any signs of discomfort, capturing verbal feedback and screen recordings to inform targeted refinements. Based on this input, we clarified instructional text, adjusted control mappings, and enhanced visual cues to improve clarity and ease of use.

To ensure cross‑platform compatibility, the simulation was installed and tested on multiple VR headsets and desktop browsers. We confirmed that the system correctly detected each device, switched to the appropriate input scheme, and automatically adjusted graphical settings for a smooth experience.

A brief verification pass on both VR and web versions confirmed that reported issues were resolved and that no new regressions had been introduced. This iterative testing approach delivered a stable, intuitive, and high‑fidelity training tool across all supported platforms.

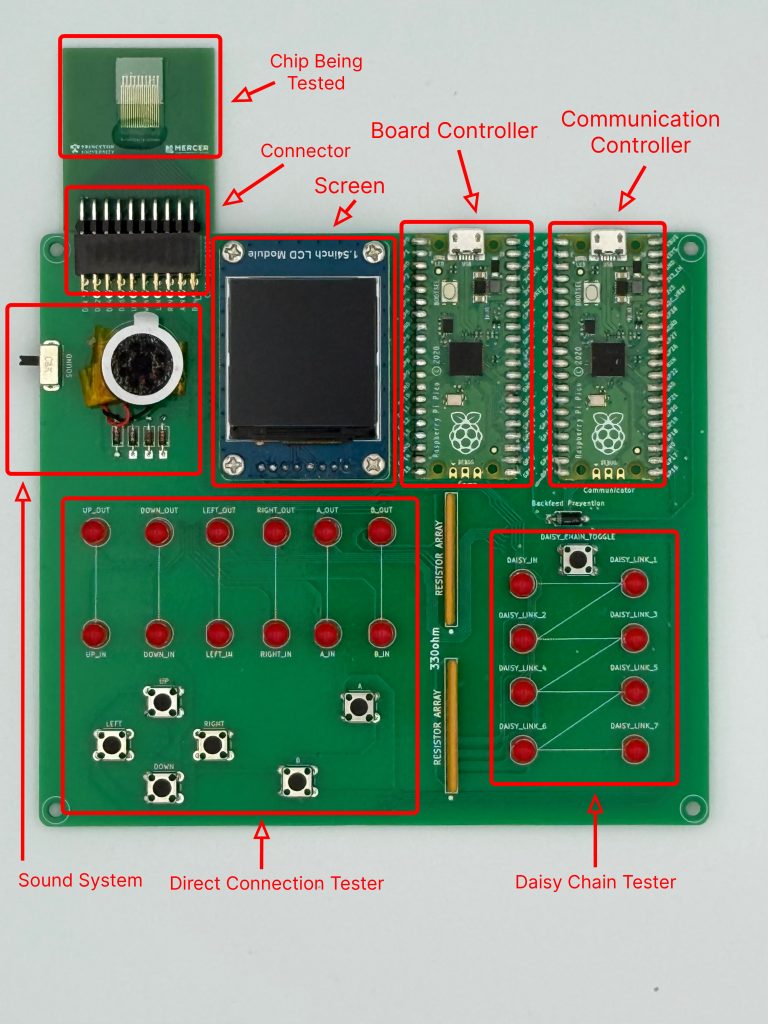

Validation of Physical Packaged Device Using a Testbed

In parallel with DT/AI development, the East Coast team carried out a companion physical hardware validation effort, in which a test chip was designed, fabricated, and packaged by Princeton University and validated using a custom-designed testbed consisting of desktop software and a test board, as shown in Figure 8. The board is built with Raspberry Pi Pico microcontrollers to test connections between the silicon die and its expected packaging function. There are two distinct built-in test structures on the test chip. One is a set of direct pass-through connections that allow a signal to travel directly from one pin to another. The other is a chain of several daisy-chained I/O nodes. These types of structures are used to check for any faults, defects, or disconnects in the wiring of the chip I/O.

For demonstration, the test board has physical buttons to test the direct pass-through connections. Pressing a button sends a signal out on the corresponding signal line, and if the signal is echoed back, the line is deemed defect free. For the daisy chain, the board contains LEDs that light up when a test signal successfully passes through the specified connecting nodes. The tests do not verify the entire chain at once but verify that each node in isolation can be addressed at its equivalent test point. The board may be run stand-alone for these tests. For more comprehensive and automated testing, however, the board can be connected via USB to a desktop application. The application, written in Python with the PyQt6 framework, gives instructions to the board and runs each test step by step. The output is displayed in real time on the computer, making the testing process faster, more consistent, and easier to reproduce. To aid in testing and validation, Princeton University fabricated two chip samples: a working chip and one that was intentionally faulty. This allowed us to double-check results and confirm that our system correctly identified and marked connectivity issues between the die and the package. The testbed successfully located the faulty pin connection, and diagnosis showed excess bonding glue to be the cause of the defect. Such physical hardware testing with a test chip, a testbed, and controller software is a necessary process step to identify and diagnose connectivity issues, if any, between the die and its package.
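
The two test structures can be modeled in software to show the logic the testbed follows. The sketch below is a simulation only: it does not talk to hardware, it is not the authors' PyQt6 application, and the pin numbers, wiring dictionaries, and function names are hypothetical.

```python
def check_pass_through(echo, pin_pairs):
    """Drive each output line and check that the paired input echoes it.

    echo(out_pin) returns the set of input pins where the signal appears;
    on real hardware this would wrap GPIO calls (simulated here).
    """
    return {(out_pin, in_pin): in_pin in echo(out_pin)
            for out_pin, in_pin in pin_pairs}


def check_daisy_chain_nodes(probe, test_points):
    """Check each chain node in isolation at its own test point."""
    return {node: bool(probe(node)) for node in test_points}


def faulty_items(results):
    """List the connections or nodes that failed."""
    return sorted(k for k, ok in results.items() if not ok)


# Simulated chips: pin 3 should reach pin 4, but is open on the faulty sample.
good_wiring = {1: {2}, 3: {4}}
faulty_wiring = {1: {2}, 3: set()}
pairs = [(1, 2), (3, 4)]

good_result = check_pass_through(lambda p: good_wiring.get(p, set()), pairs)
bad_result = check_pass_through(lambda p: faulty_wiring.get(p, set()), pairs)

# Simulated daisy chain: node "N2" does not respond at its test point.
responding_nodes = {"N1", "N3"}
chain_result = check_daisy_chain_nodes(lambda n: n in responding_nodes,
                                       ["N1", "N2", "N3"])

print(faulty_items(good_result), faulty_items(bad_result), faulty_items(chain_result))
```

Running the same checks against a known-good and a known-faulty sample, as the team did with the two fabricated chips, confirms that the test logic itself reports faults correctly.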

Results and Discussion

Notable features of this project include two new training content areas, the photolithography workflow and device packaging, and tightly coupled AI engines that customize learning for individual learners. Consistent with recent literature documenting the rapid expansion of AI in e-learning environments [14,15], this work explores how personalized AI guidance embedded within immersive virtual training influences learner engagement, perceived instructional value, and interest in continued use.

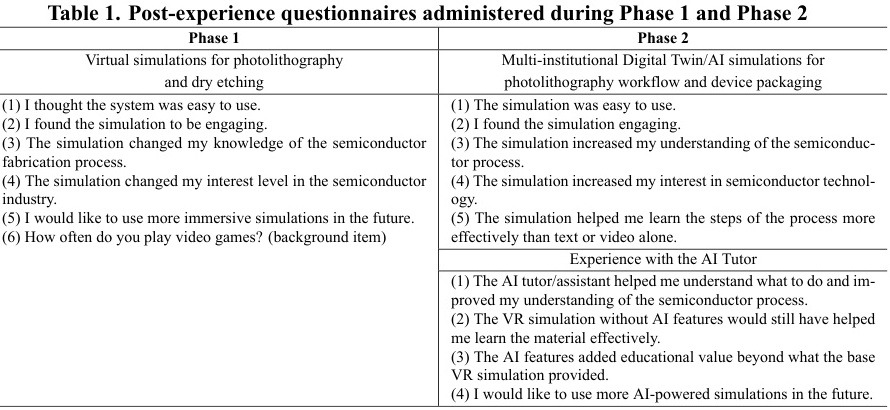

Survey Design and Measures

Learner feedback plays a central role in guiding iterative improvements to training content and instructional design. Building on previously published survey constructs for assessing learner satisfaction, motivation, perceived learning, and usability [16-18], the present study refined survey instruments to focus on aspects of the learning experience, as shown in Table 1.

Data were collected using post-experience surveys administered immediately after participants completed a virtual reality (VR) semiconductor fabrication simulation. Two cohorts were analyzed: a 2024 cohort that experienced a prototype VR simulation and a 2025 cohort that experienced the enhanced digital twin with agentic tutoring capability. In both cohorts, the AI tutor provided contextual, on-demand guidance during the simulation, including procedural explanations and real-time assistance when participants encountered difficulty.

Across both cohorts, participants represented a mix of academic levels, including early-stage undergraduates, advanced undergraduates, and technical certificate-track students. Prior exposure to virtual reality technologies varied, with some participants reporting little to no prior VR experience and others reporting moderate familiarity. This diversity supports the applicability of the platform across institutions with differing levels of access to physical cleanroom facilities and advanced manufacturing infrastructure.

In the 2024 study, survey instruments consisted primarily of 5-point Likert-scale items assessing learner engagement, interest in future use of VR-based learning environments, and perceptions of instructional value. In the 2024 cohort, future interest was framed as general interest in using VR for learning, whereas in the 2025 cohort, future interest was framed more specifically as interest in microfabrication-relevant digital twin simulations. Additional items captured prior exposure to VR and related technologies. All responses were anonymized and analyzed in aggregate.

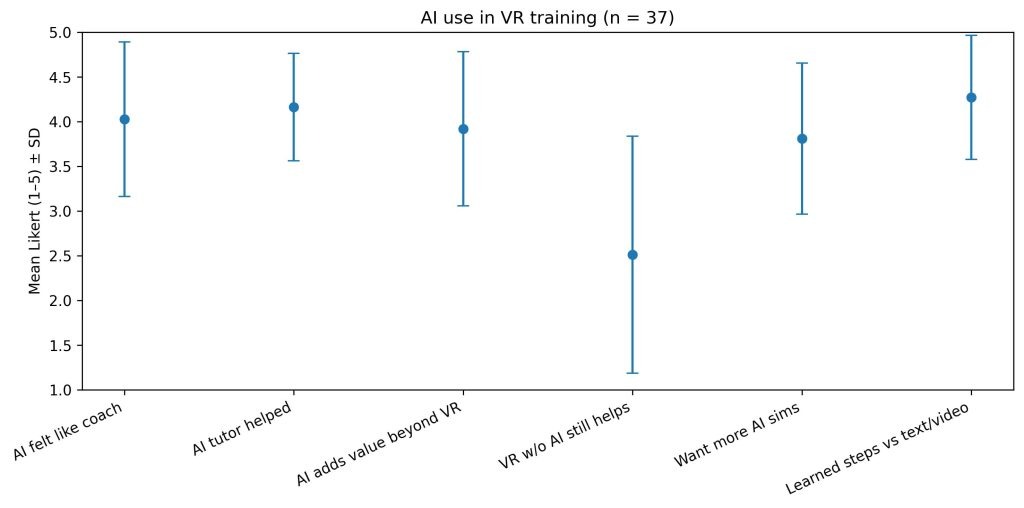

For the 2025 cohort (n = 37), survey items were grouped into conceptual categories reflecting: (1) perceived instructional support from the AI tutor (e.g., guidance, coaching behavior, and clarity of explanations), (2) perceived added value of AI beyond immersive visualization alone, (3) learner engagement and usability of the simulation experience, and (4) perceived learning outcomes, including conceptual understanding, procedural learning, and interest in semiconductor processes.

Data Analysis

Survey responses were analyzed to examine how learners perceived the instructional value of AI-assisted VR training and how these perceptions related to engagement, learning outcomes, and interest in continued use of AI-enhanced immersive environments. Likert-scale responses were summarized using descriptive statistics, with means and standard deviations used for visualization to highlight central tendencies and variability. Analyses emphasized relational patterns and explanatory trends rather than direct comparison of raw Likert magnitudes across cohorts. All statistical analyses and figure generation were performed using custom Python scripts developed for this study and made publicly available for reproducibility [19].
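The descriptive summary described above can be illustrated with a minimal sketch. It is not taken from the published analysis scripts [19]; the item names and response values below are hypothetical placeholders, chosen only to show how per-item means and sample standard deviations of 5-point Likert responses might be computed.

```python
from statistics import mean, stdev

# Hypothetical 5-point Likert responses (1 = strongly disagree, 5 = strongly agree).
# Item names and values are illustrative only and are NOT the study data.
responses = {
    "ai_as_coach": [5, 4, 5, 4, 5, 3],
    "vr_without_ai_useful": [3, 2, 4, 1, 3, 5],
}


def summarize(items):
    """Return {item: (mean, sample standard deviation, n)} for each survey item."""
    return {
        name: (mean(scores), stdev(scores), len(scores))
        for name, scores in items.items()
    }


summary = summarize(responses)
for name, (m, sd, n) in summary.items():
    print(f"{name}: mean={m:.2f}, sd={sd:.2f}, n={n}")
```

Reporting the standard deviation alongside the mean matters for ordinal survey data, since two items with similar means can differ widely in how consistently learners agreed.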

Figure 9 summarizes learner perceptions of AI-supported VR training in the 2025 cohort. Participants reported strong agreement that AI guidance functioned as an instructional coach, supported procedural understanding, and improved learning effectiveness beyond text- or video-based materials. In contrast, perceived usefulness of VR without AI support was substantially lower and more variable. Learners also expressed strong interest in additional simulations, indicating receptivity to continued adoption of AI-assisted immersive training.

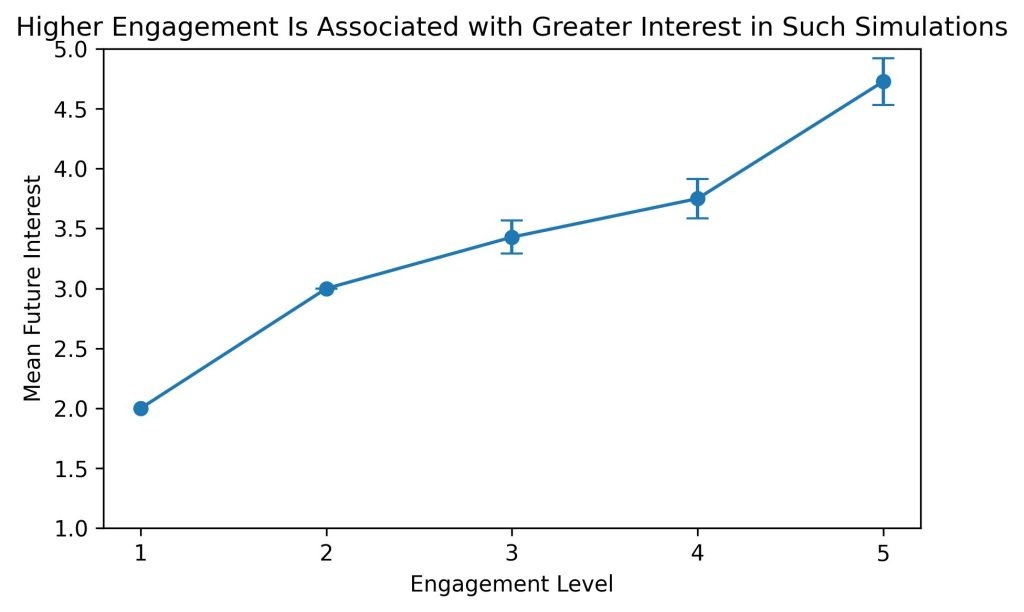

In prior work [2] conducted in 2024, learner engagement emerged as the strongest predictor of interest in future use of immersive training systems. The present study tests whether this relationship persists within an AI-enhanced VR environment.

As shown in Figure 10, mean interest in future AI-powered VR simulations increased monotonically with self-reported engagement level in the 2025 cohort. Learners reporting higher engagement expressed substantially greater interest in continued use than those reporting lower engagement. Regression analysis confirmed a strong positive association between engagement and future interest, explaining a substantial proportion of variance in adoption intent (R² ≈ 0.61–0.67, n = 37, p < 0.01). These results identify engagement as a key predictor of learner willingness to adopt AI-enhanced immersive instructional systems.
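A minimal sketch of this kind of analysis follows, assuming a simple ordinary least-squares fit of future interest on engagement. The function names and the (engagement, interest) pairs are hypothetical and do not reproduce the study data or the published scripts [19]; the sketch only shows how group means by engagement level and the coefficient of determination R² can be obtained.

```python
from collections import defaultdict

# Hypothetical (engagement, future_interest) pairs on a 5-point Likert scale.
# Illustrative values only; NOT the study data.
pairs = [(2, 2), (2, 3), (3, 3), (3, 4), (4, 4), (4, 5), (5, 5), (5, 5)]


def mean_by_level(data):
    """Mean future interest for each self-reported engagement level."""
    groups = defaultdict(list)
    for engagement, interest in data:
        groups[engagement].append(interest)
    return {level: sum(v) / len(v) for level, v in sorted(groups.items())}


def ols_fit(data):
    """Least-squares fit of interest on engagement; returns (slope, intercept, R^2)."""
    n = len(data)
    mx = sum(x for x, _ in data) / n
    my = sum(y for _, y in data) / n
    sxx = sum((x - mx) ** 2 for x, _ in data)
    sxy = sum((x - mx) * (y - my) for x, y in data)
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in data)
    ss_tot = sum((y - my) ** 2 for _, y in data)
    return slope, intercept, 1 - ss_res / ss_tot


level_means = mean_by_level(pairs)
slope, intercept, r_squared = ols_fit(pairs)
print(level_means)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.2f}")
```

The sketch omits the significance test; a p-value for the slope would additionally require the t-distribution, which a statistics package typically provides.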

Qualitative Feedback

Qualitative comments from the 2025 cohort aligned with these quantitative trends. Participants frequently described AI guidance as helpful for procedural understanding and real-time clarification, while suggestions for improvement focused on increased interactivity and more natural AI voice delivery. These responses reinforce the conclusion that instructional quality and learner engagement jointly mediate interest in AI-enhanced immersive training.

Limitations

This study has several limitations. Survey instruments differed slightly between cohorts, particularly in the framing of future interest, which precludes direct comparison of raw Likert values across years. Engagement and interest were measured using self-report instruments rather than behavioral metrics. In addition, sample sizes within some engagement categories were small, and results should be interpreted in terms of relational trends rather than precise point estimates.

Cost-Benefit Analysis of Transfer of Training (ToT)

Effectiveness in Transfer of Training (ToT) is a central concern in industrial and organizational psychology, as it determines whether knowledge, skills, and abilities acquired during instruction are successfully applied in real-world settings [20]. Transfer of training is commonly conceptualized as involving three stages: development of training content, acquisition and retention of knowledge, skills, and abilities (KSAs), and the generalization and maintenance of those KSAs in the workplace [21].

The present bi-coastal project primarily addresses the first two stages of this framework. Within the context of contemporary interactive simulation technologies, our results demonstrate that developing high-fidelity virtual simulations for semiconductor training can be achieved at comparatively low cost and with reduced access barriers relative to physical cleanroom instruction. Moreover, coupling immersive simulation with AI-based tutoring and conversational guidance supports self-directed learning and strengthens KSA development during the training phase. As noted by Blume et al. [22], “the challenge is not how to build a bigger and more influential transfer support system; it is how to make transfer a more integral part of the existing organizational climate.”

The current scope of this study is limited to university educational laboratories as content sources and post-secondary students as trainees. Future work would benefit from the involvement of an industry advisory board to guide development, evaluate realism, and provide application-driven feedback. While application-specific cost–benefit data from the semiconductor industry were not available, prior VR training studies in other industrial domains report improvements of up to 75% in KSA retention and comparable reductions in training time relative to traditional methods [23,24].

Summary of Results

Figure 12 shows the DT/AI outcomes developed at the two partner institutions, UC Irvine and Princeton/MCCC. The UC Irvine team focused on cleanroom and lithography workflows, while the Princeton/MCCC team emphasized packaging processes and chip-board I/O connectivity testing.

Pilot participants, including undergraduates and community college students, showed gains in confidence and task fluency after using the DT/AI modules. Learners who had no prior exposure to semiconductor tools were able to complete multi-step procedures, such as wafer alignment or flip-chip bonding, within the simulation. Feedback highlighted that real-time hints from the AI tutor reduced frustration and encouraged persistence, while the branching scenario flows kept learners engaged.

Iterative pilot sessions revealed clear patterns of improvement. Early users often struggled with navigation and interpretation of prompts, but refinements, such as simplifying instructional text and enhancing visual cues, shortened task completion times and reduced navigation errors in later sessions.

The integration of AI tutors and copilots added unique benefits beyond conventional simulation training. Learners described the AI agents as “like having a coach in the room,” noting that they provided reassurance and immediate redirection.

The findings suggest that DT/AI modules are effective for preparing learners before they enter cleanrooms or packaging labs, ensuring that costly equipment time is used more efficiently. By building familiarity with process flows and tool interfaces virtually, students can arrive at physical labs with baseline competencies, reducing instructor workload and minimizing errors on sensitive systems.

Conclusion

This bi-coastal collaboration demonstrates the feasibility and scalability of a Digital Twin and AI (DT/AI) training framework spanning both front-end semiconductor fabrication and back-end device packaging. By integrating immersive digital twins with agentic AI tutors, the platform enables learners to practice complex procedures safely, repeatedly, and accessibly without the constraints of physical cleanroom availability, while accelerating DT/AI development through effective cross-institutional collaboration.

Standardized development guidelines, shared asset libraries, and no-code authoring workflows allowed student teams from diverse institutions to contribute efficiently while maintaining technical fidelity. Cross-platform deployment validated that the same instructional scenarios function consistently across VR headsets and web browsers, expanding accessibility. Iterative refinements based on user observations improved task flow and usability, resulting in an intuitive learning experience across learner backgrounds.

Originally focused on front-end photolithography at UC Irvine, the platform was expanded to include advanced packaging workflows developed with Princeton partners, enabling coverage of both fabrication and assembly stages. The inclusion of physical testbed validation further reinforces the importance of post-production inspection and diagnostics. Collectively, these outcomes support the DT/AI framework as a scalable, cost-effective, and broadly applicable model for immersive semiconductor workforce training.

Lessons Learned and Future Work

Lessons learned from this bi-coastal DT/AI implementation indicate that effective workforce training benefits from a multi-modal approach combining conventional text, instructional videos, and immersive DT/AI-based interactions. DT/AI is particularly valuable for teaching spatial, procedural, and safety-critical tasks that are difficult to convey through traditional media alone. Organizing DT/AI content using textbook-style structure, supported by embedded videos, captions, transcripts, and contextual pop-up guidance, improved clarity and learner navigation across modules.

While this study expanded participation across institutions, broader and more diverse learner populations would enable stronger generalization of results. Future work will focus on expanding DT/AI coverage to additional fabrication and packaging processes, incorporating AI-driven personalization and adaptive feedback, and scaling collaboration with other semiconductor facilities to grow a shared national DT/AI training library supporting long-term skill acquisition, retention, and transfer.

Acknowledgments. The authors would like to extend their gratitude to Professor G.P. Li, Marc Palazzo, and C.Y. Lee at the University of California, Irvine, for their guidance and access to the INRF research labs, and Mr. Bert Harrop and Dr. Alex Norman of Princeton University for their tutorials on advanced device packaging and access to the Advanced Device Package Lab at Princeton’s Micro Nano Fabrication Center. We also thank SimInsights Inc. for granting access to their tools and mentorship. A special thanks to Professor Jared Ashcroft at Pasadena City College for expanding and supporting this integrated DT/AI effort from one coast to the other. Finally, we acknowledge the funding support from the National Science Foundation Advanced Technological Education (ATE) Micro Nano Technology Education Center.

Disclosures. The authors declare no conflicts of interest.

[1] Semiconductor Industry Association, “America faces a significant shortage of tech workers in the semiconductor industry and throughout the U.S. economy.” [Online]. Available: https://www.semiconductors.org

[2] I. Jha et al., “AI-Powered VR Simulations for Semiconductor Industry Training and Education,” Journal of Advanced Technological Education, vol. 4, no. 1, Feb. 2025, doi: 10.5281/zenodo.14933891.

[3] “The Role of Digital Twin in Manufacturing,” Siemens, 2020. Accessed: Apr. 1, 2025. [Online]. Available: siemens.com/us/en/company/topic-areas/advanced-manufacturing/digital-twin-in-advanced-manufacturing

[4] R. Giachetti. “Digital Engineering.” Systems Engineering Body of Knowledge (SEBoK). Accessed: Apr. 1, 2025. [Online]. Available: https://sebokwiki.org/wiki/Digital_Engineering

[5] A. Agrawal et al., “Digital Twin: Where do humans fit in?” Automation in Construction. [Online]. Available: arxiv.org/pdf/2301.03040

[6] “Integrated Nanosystems Research Facility (INRF),” UCI Research Shared Facilities, Univ. California, Irvine. Accessed: Mar. 5, 2024. [Online]. Available: https://research.uci.edu/shared-facility/integrated-nanosystems-research-facility-inrf/

[7] “Advanced Micro Assembly and Packaging,” Princeton Materials Inst., Princeton Univ. Accessed: Feb. 3, 2025. [Online]. Available: https://materials.princeton.edu/core-facilities/advanced-micro-assembly-packaging

[8] SimInsights. HyperSkill User’s Guide. (2025). Accessed: Mar. 30, 2025. [Online]. Available: https://docs.siminsights.com/

[9] Blender 4.4.3. (2025). Blender Foundation. Accessed: Apr. 3, 2025. [Online]. Available: https://www.blender.org/

[10] Unity 6.1. (2025). Unity Technologies. Accessed: Apr. 3, 2025. [Online]. Available: https://unity.com/

[11] Amazon Web Services S3 (Simple Storage Service). (2025). Amazon Web Services. Accessed: Apr. 5, 2025. [Online]. Available: https://aws.amazon.com/s3/

[12] OpenAI. (2025). OpenAI. Accessed: May 28, 2025. [Online]. Available: https://openai.com/

[13] “HyperSkill,” SimInsights. Accessed: Mar. 30, 2025. [Online]. Available: https://www.siminsights.com/hyperskill/

[14] N. Kshetri, “Revolutionizing Higher Education: The Impact of Artificial Intelligence Agents and Agentic Artificial Intelligence on Teaching and Operations,” IT Professional, vol. 27, no. 2, pp. 12–16, Mar./Apr. 2025.

[15] D. Burgos, (ed.), Radical Solutions and eLearning. Lecture Notes in Educational Technology. Springer, Singapore, 2020.

[16] A. Y. Kondratev and A. G. Krokhin, “The Use of Artificial Intelligence in E-Learning Using the Example of the Deeptalk Software Product,” 2024 7th International Conference on Information Technologies in Engineering Education (Inforino), 2024, pp. 1-4, doi: 10.1109/Inforino60363.2024.10551989.

[17] A. Srinivasa et al., “Virtual reality and its role in improving student knowledge, self-efficacy, and attitude in the materials testing laboratory,” International Journal of Mechanical Engineering Education, 2020.

[18] J. R. Lewis, “The system usability scale: Past, present, and future,” International Journal of Human–Computer Interaction, 2018.

[19] K. Hong. “VR_Sim_Analysis_2025.” https://github.com/KHONG707/VR_Sim_Analysis_2025.

[20] H. O’Brien et al., “A practical approach to measuring user engagement with the refined user engagement scale (UES) and new UES short form,” International Journal of Human-Computer Studies, 2018.

[21] J. K. Ford, “Transfer of Training,” Encyclopedia of Industrial and Organizational Psychology, 2007, doi: 10.4135/9781412952651.n320.

[22] B. D. Blume, J. K. Ford, T. T. Baldwin, and J. L. Huang, “Transfer of Training: A Meta-Analytic Review,” Journal of Management, vol. 36, no. 4, pp. 1065–1105, 2010, doi: 10.1177/0149206309352880.

[23] T. Fell, “VR training cost analysis and ROI worth the investment,” Immersive Learning News. Accessed: Oct. 27, 2024. [Online]. Available: immersivelearning.news/2024/11/01/vr-training-cost-analysis-and-roi-worth-the-investment

[24] R. Cerga, “Cost analysis of XR training vs traditional,” VR Vision Group. Accessed: Aug. 6, 2025. [Online]. Available: vrvisiongroup.com/cost-analysis-of-xr-training-vs-traditional/